ElevenLabs Conversational AI lets you create voice agents with cloned or synthetic voices. By pointing an ElevenLabs agent at Pria’s Chat Completions API as a Custom LLM, your Digital Twin becomes the brain behind a real-time voice experience, with no additional backend code required.

How It Works

ElevenLabs Agent

Handles voice input/output, speech-to-text, text-to-speech, and the real-time audio stream.

Chat Completions API

Receives OpenAI-compatible requests from ElevenLabs and routes them to your Digital Twin.

Digital Twin

Generates intelligent responses using its knowledge base, tools, assistants, and conversation memory.
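To make the hand-off between the three components concrete, here is a minimal TypeScript sketch of the kind of OpenAI-compatible request body that reaches the Chat Completions API. The field names follow the public OpenAI chat completions format. The helper name `buildChatCompletionRequest`, the placeholder UUID, and the choice of `stream: true` are illustrative assumptions, not confirmed details of what ElevenLabs sends.

```typescript
// Minimal shape of an OpenAI-compatible chat completion request.
interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

interface ChatCompletionRequest {
  model: string; // carries the Digital Twin's Public ID
  messages: ChatMessage[];
  stream: boolean;
}

// Hypothetical helper: builds the request body for a single user turn.
function buildChatCompletionRequest(
  digitalTwinPublicId: string,
  userText: string
): ChatCompletionRequest {
  return {
    model: digitalTwinPublicId,
    messages: [{ role: "user", content: userText }],
    stream: true, // assumption: a real-time voice agent consumes streamed tokens
  };
}

// Placeholder UUID, not a real Digital Twin.
const requestBody = buildChatCompletionRequest(
  "00000000-0000-0000-0000-000000000000",
  "What are your office hours?"
);
console.log(JSON.stringify(requestBody, null, 2));
```

In the real integration ElevenLabs builds this request for you; the sketch only shows where the Digital Twin's Public ID ends up.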

Prerequisites

Before you begin, make sure you have:

- An ElevenLabs account with access to Conversational AI Agents

- A voice created and trained in ElevenLabs

- A Praxis AI account with at least one Digital Twin configured

- Your Digital Twin’s Public ID (a UUID found in the administration panel)

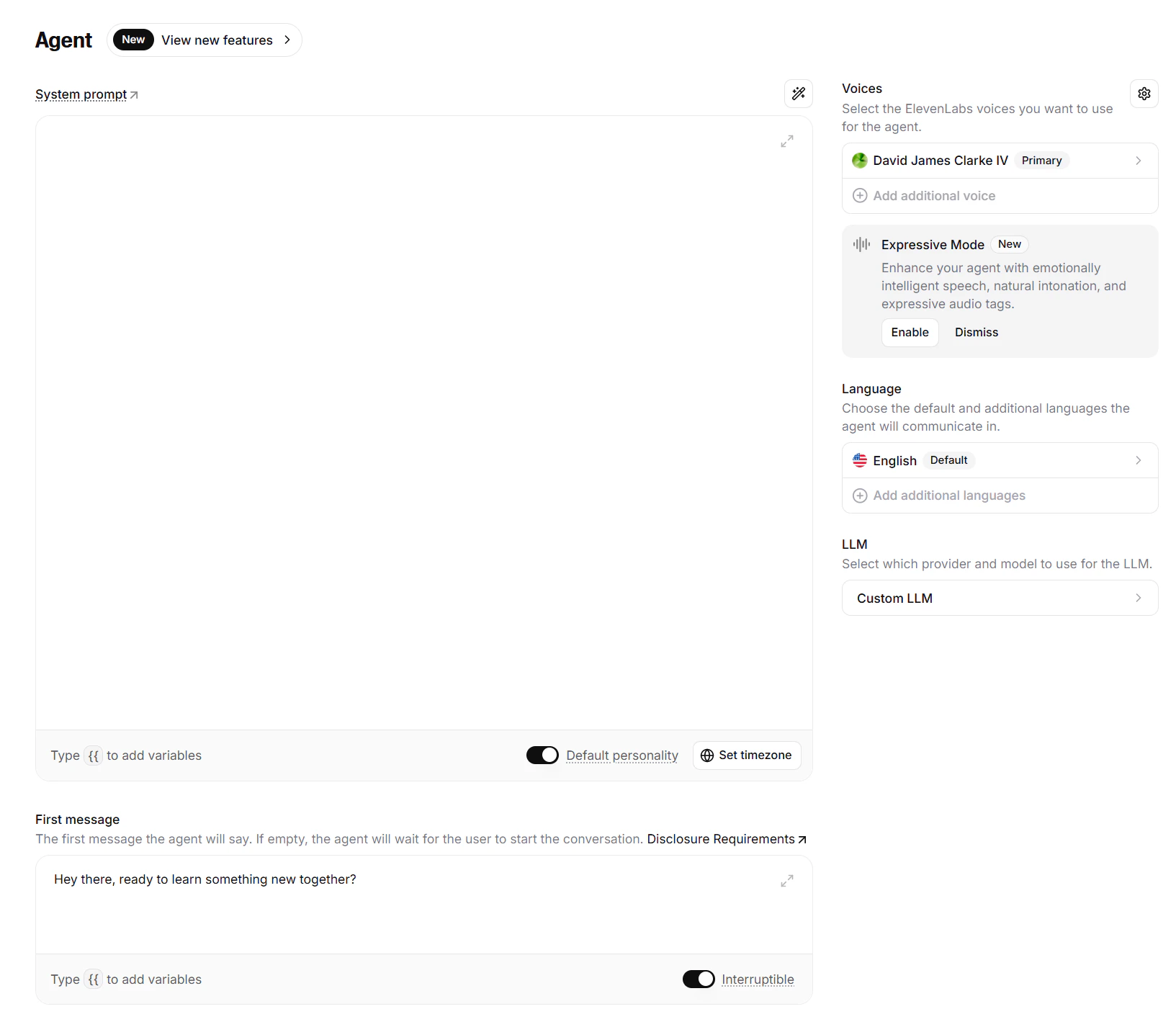

Step 1: Create an ElevenLabs Agent

Go to the ElevenLabs Dashboard

Navigate to Conversational AI in your ElevenLabs account and create a new agent.

Choose a voice

Select a stock voice, or use ElevenLabs’ voice cloning to create a custom voice that matches your Digital Twin’s persona.

Configure the system prompt

The Digital Twin manages its own system instructions server-side. Use the ElevenLabs system prompt for voice-specific behavior only (e.g., greeting style, conversation pacing).

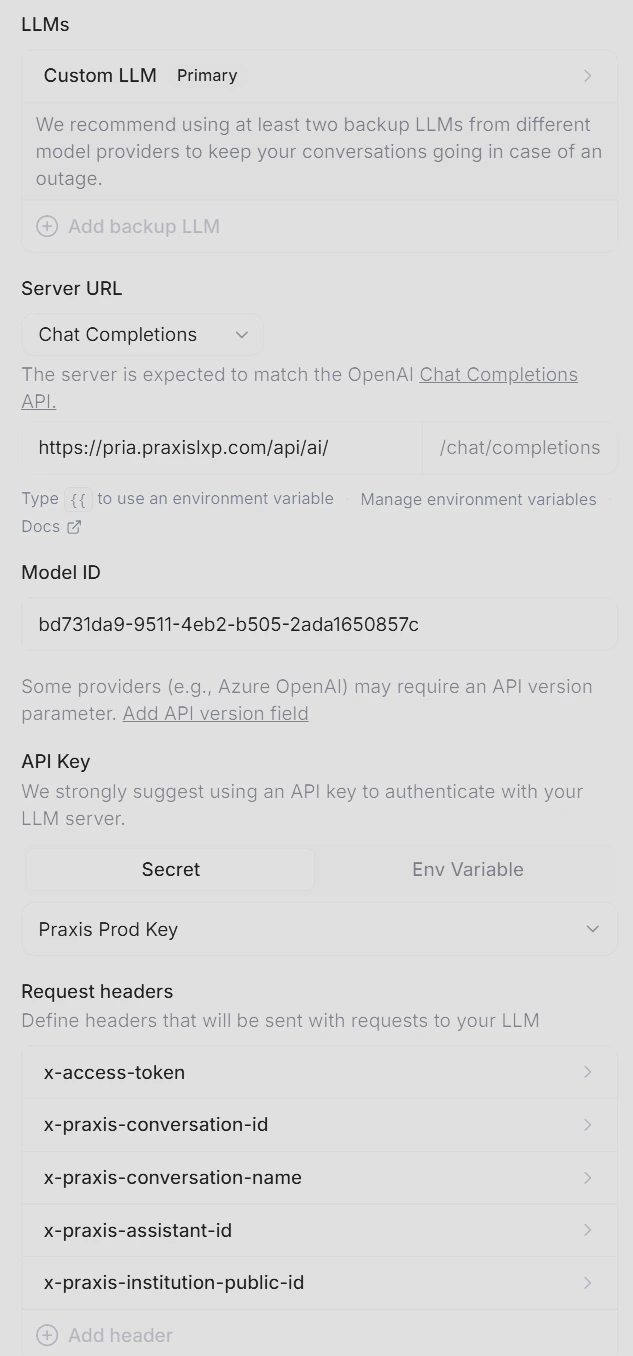

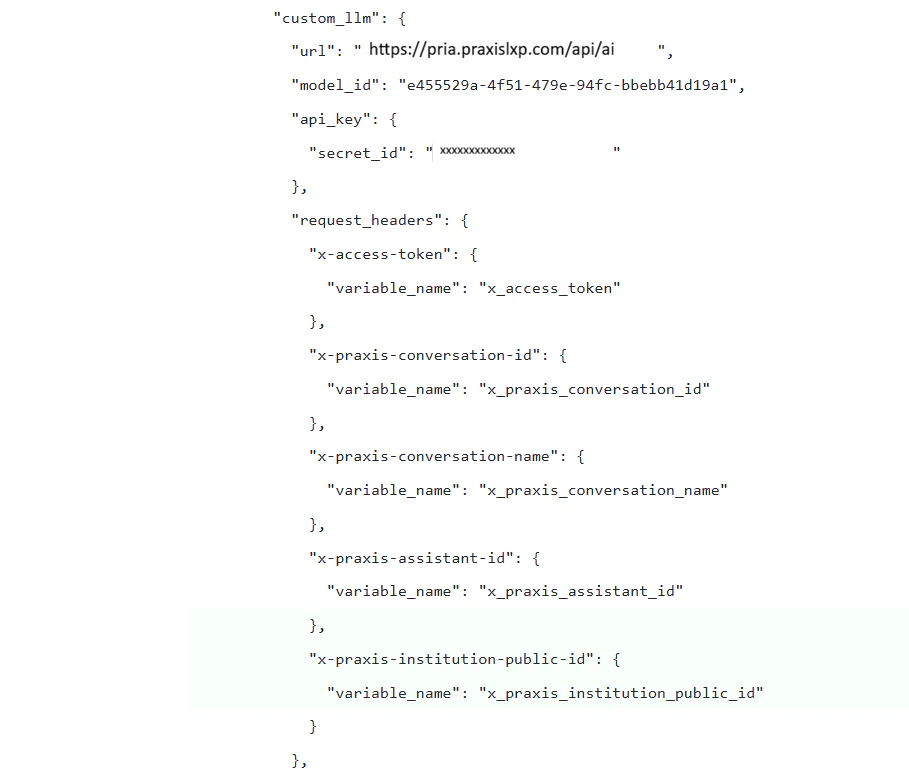

Step 2: Connect to Custom LLM

This is the core configuration — tell your ElevenLabs agent to use your Digital Twin as its language model.

Set the Model ID

Enter your Digital Twin’s Public ID as the model:
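Because the Public ID is a UUID, a quick format check can catch copy-paste mistakes before the value goes into the model field. The following is a small sketch; the function name `looksLikePublicId` is hypothetical, and the check only validates the shape of the string, not whether the Digital Twin exists.

```typescript
// Matches the canonical 8-4-4-4-12 hexadecimal UUID layout.
const UUID_PATTERN =
  /^[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}$/i;

// Hypothetical helper: true when the value is shaped like a UUID.
// Surrounding whitespace is ignored, since it often sneaks in when copying.
function looksLikePublicId(value: string): boolean {
  return UUID_PATTERN.test(value.trim());
}
```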

The Model ID maps directly to the Digital Twin Public ID. ElevenLabs includes it in every request as the model parameter, which is exactly what the Chat Completions API expects.

Step 3: Configure Dynamic Variables

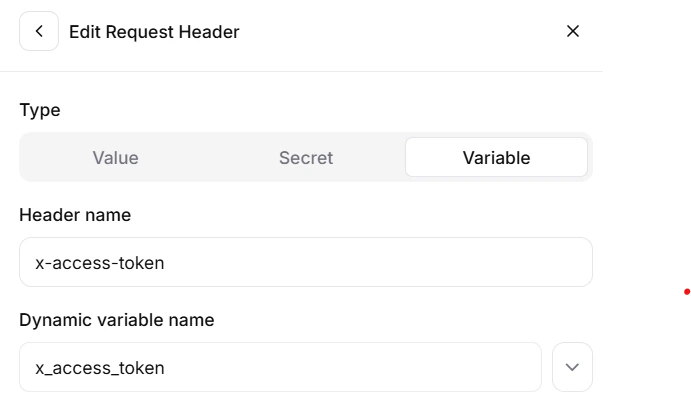

Dynamic variables let you pass user identity and conversation context from the client application through ElevenLabs to your Digital Twin. These are mapped to the x-access-token and x-praxis-* request headers that the Chat Completions API forwards to Praxis.

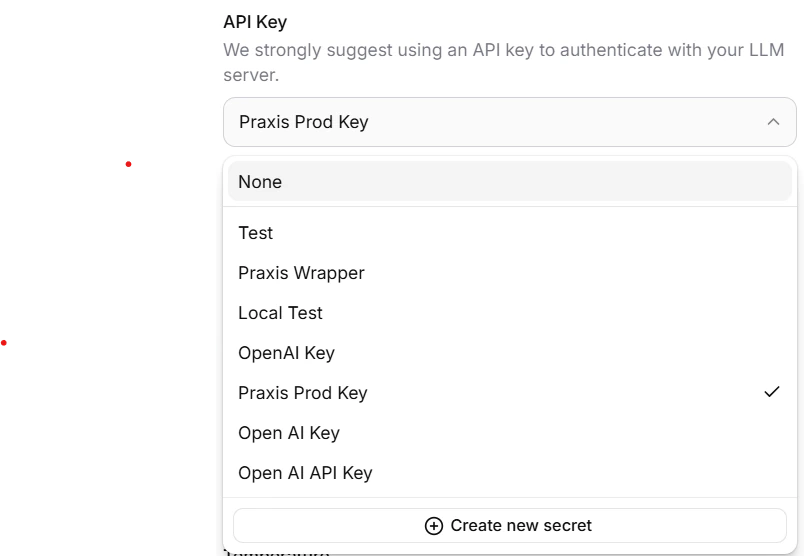

Define Variables in ElevenLabs

| Header | Description | Dynamic Variable |
|---|---|---|
| x-access-token | Praxis JWT for user authentication | x_access_token |
| x-praxis-conversation-id | Numeric conversation/course identifier | x_praxis_conversation_id |
| x-praxis-conversation-name | Human-readable conversation name | x_praxis_conversation_name |
| x-praxis-assistant-id | Assistant to execute during the conversation | x_praxis_assistant_id |
| x-praxis-institution-public-id | Override the target Digital Twin when using the voice agent for multiple Twins | x_praxis_institution_public_id |
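The naming pattern in the table (underscores in the variable name, hyphens in the header name) can be sketched as a lookup. The mapping itself is configured in ElevenLabs, so this TypeScript snippet is purely illustrative; `HEADER_BY_VARIABLE` and `toRequestHeaders` are hypothetical names.

```typescript
// Dynamic variable name -> request header name, copied from the table.
const HEADER_BY_VARIABLE = {
  x_access_token: "x-access-token",
  x_praxis_conversation_id: "x-praxis-conversation-id",
  x_praxis_conversation_name: "x-praxis-conversation-name",
  x_praxis_assistant_id: "x-praxis-assistant-id",
  x_praxis_institution_public_id: "x-praxis-institution-public-id",
} as const;

type VariableName = keyof typeof HEADER_BY_VARIABLE;
type PraxisVariables = Partial<Record<VariableName, string>>;

// Hypothetical helper: turns the variables that are set into headers,
// skipping any that are missing or empty.
function toRequestHeaders(vars: PraxisVariables): Record<string, string> {
  const headers: Record<string, string> = {};
  const names = Object.keys(HEADER_BY_VARIABLE) as VariableName[];
  for (const name of names) {
    const value = vars[name];
    if (value !== undefined && value !== "") {
      headers[HEADER_BY_VARIABLE[name]] = value;
    }
  }
  return headers;
}
```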

How Variables Flow

elevenlabs_extra_body:

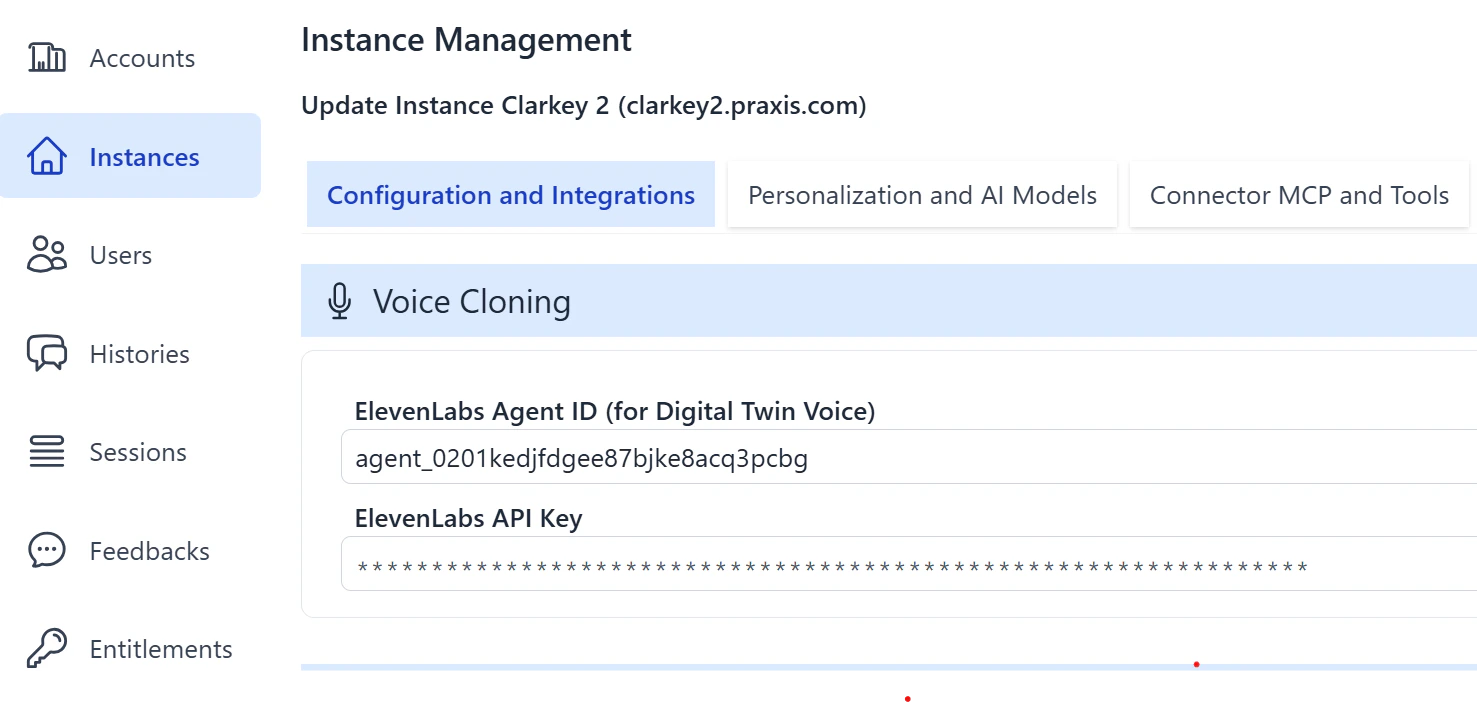

Step 4: Configure Your Digital Twin

After setting up the ElevenLabs agent, you must configure your Digital Twin in Praxis to accept requests from ElevenLabs.

Set the ElevenLabs Agent ID

Enter the Agent ID from your ElevenLabs Conversational AI agent. This is the identifier shown in your ElevenLabs dashboard under the agent settings.

Set the ElevenLabs API Key

Enter the same API Key you configured in Step 2. This allows the Chat Completions API to validate inbound requests from ElevenLabs.

See Configuration — ElevenLabs for full details on the credential fields (Agent ID and API Key).

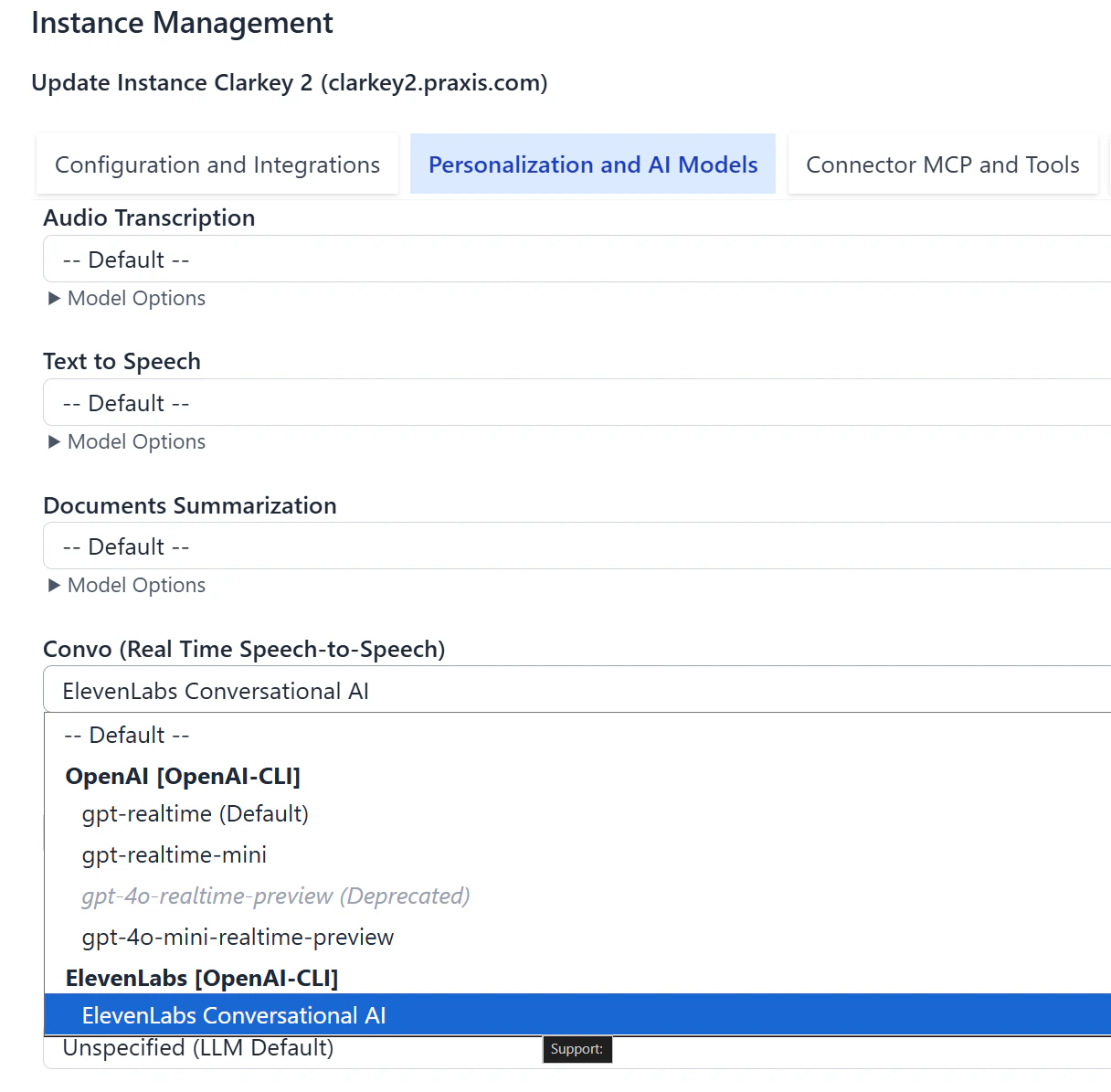

Step 5: Use ElevenLabs for Convo Mode

Once the ElevenLabs agent is configured in your Digital Twin, you can select ElevenLabs as the voice provider for Pria’s built-in Convo Mode (speech-to-speech).

Open Personalization

In the Admin dashboard, select your Digital Twin instance and navigate to the Personalization tab.

Change the Convo Mode model

Under Convo Mode, select ElevenLabs from the list of supported voice providers.

Voice selection is not available in ElevenLabs Convo Mode. The voice used is the one configured in your agent’s settings on the ElevenLabs dashboard. To change the voice, update it in your ElevenLabs agent configuration.

Connection Method

ElevenLabs supports two connection methods for the audio stream between the browser and ElevenLabs servers:

| Method | Description |
|---|---|
| WebRTC (default) | Low-latency peer-to-peer connection. Best for most environments. |
| WebSocket | Server-relayed connection. Use when WebRTC is blocked by firewalls or restrictive network policies. |
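In Convo Mode the connection method is a setting, but if you drive the ElevenLabs SDK from your own client code, the same choice can be expressed as a fallback: try WebRTC first, then retry over WebSocket. The sketch below assumes a caller-supplied `start` function that opens a session over the requested transport; `startWithFallback` and `AudioTransport` are hypothetical names, and the `connectionType` option mentioned in the comment is an assumption about the SDK, not something this page confirms.

```typescript
type AudioTransport = "webrtc" | "websocket";

// Caller-supplied function that opens a session over the given transport,
// e.g. (t) => Conversation.startSession({ agentId, connectionType: t })
// (assumed SDK usage; adapt to the SDK version you have installed).
type StartSession<S> = (transport: AudioTransport) => Promise<S>;

async function startWithFallback<S>(
  start: StartSession<S>
): Promise<{ session: S; transport: AudioTransport }> {
  try {
    // Preferred path: low-latency WebRTC.
    return { session: await start("webrtc"), transport: "webrtc" };
  } catch {
    // WebRTC failed (for example, blocked by a firewall): retry over WebSocket.
    return { session: await start("websocket"), transport: "websocket" };
  }
}
```

If the WebSocket attempt also fails, the error propagates to the caller, which is usually the right place to show a message to the user.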

Step 6: Deploy Client Widgets (optional)

ElevenLabs provides multiple ways to embed a voice agent in your application. Each method supports passing dynamic variables at session start.

HTML Widget

The simplest option is to add two lines to any web page:

React Integration

For React applications, use the @elevenlabs/react package with dynamic variables:
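As a sketch of the dynamic-variables half of that setup, the helper below turns a user context into the variable names listed in Step 3. The `PraxisUserContext` type and `buildDynamicVariables` function are hypothetical; the hook usage in the trailing comment assumes the `useConversation` hook from @elevenlabs/react and is left as a comment so the snippet runs without the package installed.

```typescript
// Hypothetical description of what your app knows about the signed-in user.
interface PraxisUserContext {
  accessToken: string; // Praxis JWT
  conversationId?: number;
  conversationName?: string;
  assistantId?: string;
  institutionPublicId?: string;
}

type DynamicVariables = Record<string, string>;

// Builds the dynamic variables, leaving out any value that is not set.
function buildDynamicVariables(ctx: PraxisUserContext): DynamicVariables {
  const vars: DynamicVariables = { x_access_token: ctx.accessToken };
  if (ctx.conversationId !== undefined) {
    vars["x_praxis_conversation_id"] = String(ctx.conversationId);
  }
  if (ctx.conversationName) {
    vars["x_praxis_conversation_name"] = ctx.conversationName;
  }
  if (ctx.assistantId) {
    vars["x_praxis_assistant_id"] = ctx.assistantId;
  }
  if (ctx.institutionPublicId) {
    vars["x_praxis_institution_public_id"] = ctx.institutionPublicId;
  }
  return vars;
}

// Inside a component (assumed hook usage, not verified against this page):
//   const conversation = useConversation();
//   await conversation.startSession({
//     agentId: AGENT_ID,
//     dynamicVariables: buildDynamicVariables(ctx),
//   });
```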

JavaScript SDK (Vanilla)

For non-React applications, use the @elevenlabs/client SDK directly:
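A minimal sketch of the start-up order follows: ask for microphone access first, then open the session. The browser and SDK calls are injected so the snippet runs on its own; `VoiceDeps`, `SessionOptions`, and `startVoiceSession` are hypothetical names, and the suggested wiring in the comments (`navigator.mediaDevices.getUserMedia` and `Conversation.startSession` from @elevenlabs/client) is an assumption about a typical setup.

```typescript
interface SessionOptions {
  agentId: string;
  dynamicVariables?: Record<string, string | number | boolean>;
}

interface VoiceDeps<S> {
  // e.g. () => navigator.mediaDevices.getUserMedia({ audio: true })
  requestMicrophone: () => Promise<unknown>;
  // e.g. (options) => Conversation.startSession(options)
  startSession: (options: SessionOptions) => Promise<S>;
}

// Requests the microphone before starting the session, so a denied
// permission surfaces as a rejected promise instead of a silent agent.
async function startVoiceSession<S>(
  deps: VoiceDeps<S>,
  options: SessionOptions
): Promise<S> {
  await deps.requestMicrophone();
  return deps.startSession(options);
}
```

Wrapping the SDK call in an arrow function, rather than passing the method reference directly, avoids losing its `this` binding.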

Securing Private Agents

For production deployments, use signed URLs to prevent unauthorized access to your ElevenLabs agent. You can also configure an allowlist of approved domains in your agent’s Security tab to restrict where the widget can be embedded.

Full Example: Embeddable Support Widget

A complete React component that authenticates with your backend, starts a voice session with a Digital Twin, and displays the conversation transcript:

Troubleshooting

| Symptom | Cause | Fix |
|---|---|---|
| Agent responds but has no knowledge | Model ID is wrong | Verify the Digital Twin Public ID in the LLM model field |
| 401 Unauthorized from Chat Completions API | Missing or expired JWT | Ensure x_access_token dynamic variable contains a valid Praxis JWT |
| No audio from agent | Microphone not granted | Check browser permissions; call getUserMedia before startSession |
| Agent is silent after connecting | ElevenLabs can’t reach your API | Verify the Custom LLM server URL is publicly accessible |
| “LiveKit v1” 404 in console | Normal SDK behavior | Benign version negotiation; safe to ignore |
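For the 401 case, a client-side expiry check before starting a session can rule out a stale token. The sketch below assumes the Praxis JWT carries a standard numeric `exp` claim in seconds, as defined by RFC 7519; that is an assumption, not something this page states. `jwtLooksExpired` and `base64UrlToString` are hypothetical helpers, and the check does not verify the signature.

```typescript
// Decodes a base64url segment to a UTF-8 string without atob or Buffer,
// so the sketch runs unchanged in browsers and in Node.
function base64UrlToString(segment: string): string {
  const alphabet =
    "ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz0123456789-_";
  const input = segment.replace(/=+$/, "");
  let bits = 0;
  let bitCount = 0;
  let encoded = "";
  for (let i = 0; i < input.length; i++) {
    const value = alphabet.indexOf(input.charAt(i));
    if (value < 0) throw new Error("Invalid base64url character");
    bits = ((bits << 6) | value) & 0xffff;
    bitCount += 6;
    if (bitCount >= 8) {
      bitCount -= 8;
      const byte = (bits >> bitCount) & 0xff;
      encoded += "%" + ("0" + byte.toString(16)).slice(-2);
    }
  }
  return decodeURIComponent(encoded);
}

// Returns true when the token is malformed or its exp claim has passed
// (with a small clock-skew margin). Tokens without a numeric exp claim
// are treated as non-expiring.
function jwtLooksExpired(
  token: string,
  nowSeconds: number,
  skewSeconds = 30
): boolean {
  const parts = token.split(".");
  if (parts.length !== 3) return true;
  try {
    const payload = JSON.parse(base64UrlToString(parts[1])) as { exp?: unknown };
    if (typeof payload.exp !== "number") return false;
    return payload.exp <= nowSeconds + skewSeconds;
  } catch {
    return true;
  }
}
```

A typical call would be `jwtLooksExpired(token, Math.floor(Date.now() / 1000))`, refreshing the token from your backend when it returns true.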

Related

- Chat Completions API — Set up the OpenAI-compatible endpoint that powers this integration

- Web SDK — Embed the full Digital Twin UI (text + voice) in your app

- ElevenLabs Custom LLM Docs — Official ElevenLabs configuration reference

- ElevenLabs Dynamic Variables — How variables flow through the agent