Connectors for MCP (Model Context Protocol) are currently in Beta. Features and compatibility may change as the protocol evolves.

Overview

Praxis middleware empowers you to define and deploy connectors that seamlessly link Pria, your digital assistant, to hundreds of thousands of remote MCP (Model Context Protocol) servers.

What is MCP?

MCP is an open standard designed to integrate external applications with AI models. Think of it as the universal “USB-C port” for AI ecosystems. By using remote MCP connectors, Praxis relies on OpenAI’s MCP support and Server-Sent Events (SSE) to establish live, two-way communication between Pria and virtually any external service, database, or API.

Expanded Capabilities

Your digital twin’s reach and intelligence are no longer confined to its internal capabilities. Instead, it can interact dynamically with a vast landscape of external systems, including:

- Business applications (CRM, ERP, project management)

- Databases (SQL, NoSQL, data warehouses)

- APIs (REST, GraphQL, webhooks)

- Cloud services (AWS, Azure, Google Cloud)

- Custom applications and proprietary systems (Salesforce, HubSpot, WhatsApp, Slack, etc.)

Key Benefits

Unified Integration

Tap into any external business logic, data service, or proprietary application seamlessly

Workflow Automation

Automate cross-platform workflows and eliminate manual processes

Break Down Silos

Unify fragmented services without complex custom integrations

AI-Powered Solutions

Unlock new AI capabilities by connecting to specialized external tools

Prerequisites

Supported Models

- OpenAI GPT-5 series (with MCP support)

- Other MCP-compatible models (check model documentation)

Configuration

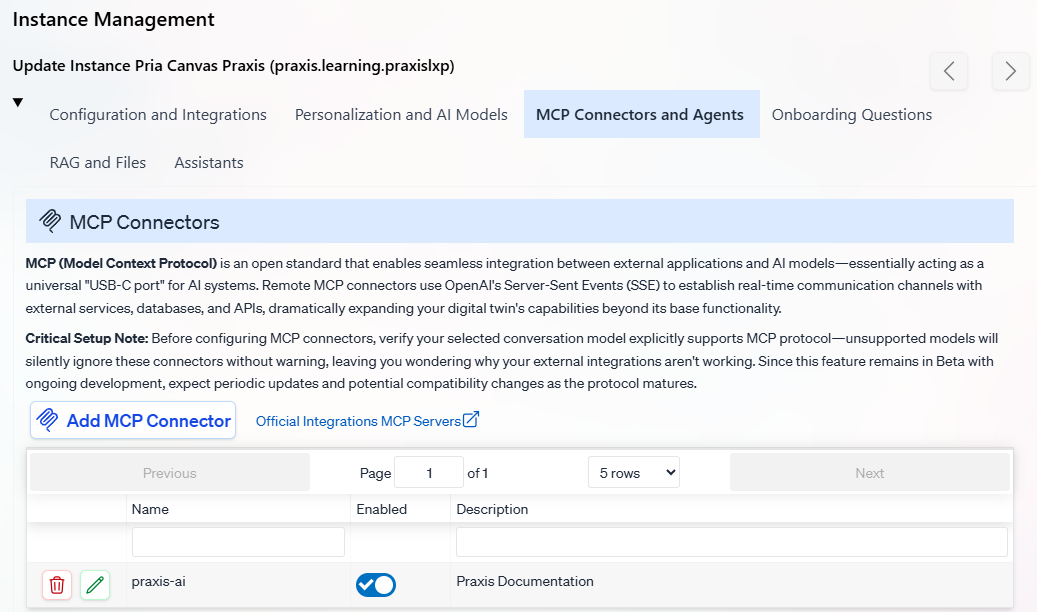

In your instance, navigate to the Edit → MCP Connectors and Tools panel to manage your connectors.

Connector List View

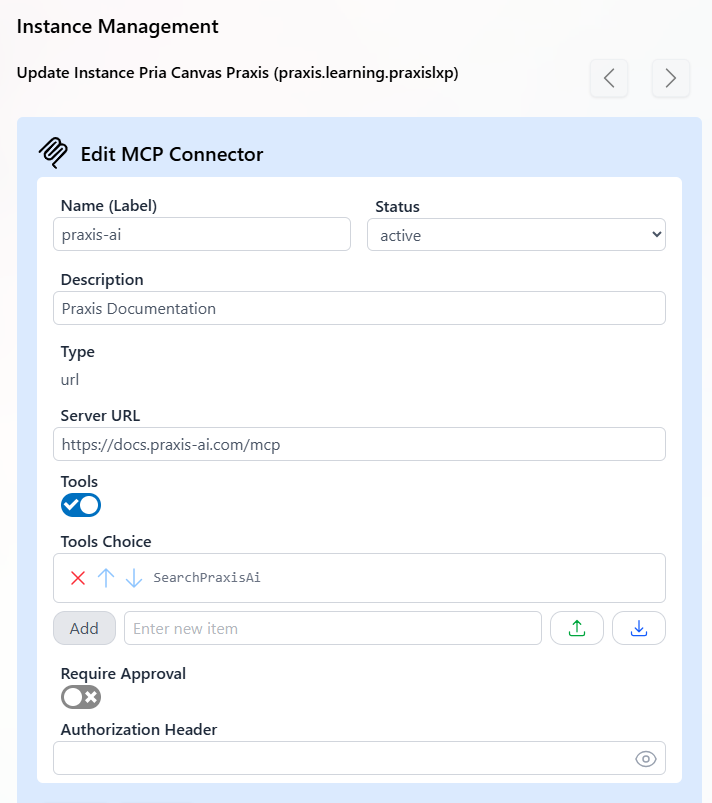

Creating/Editing Connectors

Required Fields

Connector Name (Label): Must match your MCP Server Label exactly

Status:

- Active: Connector is enabled and available to the digital twin

- Inactive: Connector is disabled

Description: A description of your MCP server’s purpose, sent to the AI model as context. Use this to help the AI understand when and how to use the server’s tools.

Example:

"This server provides access to the company's CRM. Use it to look up customer records, update contact information, and create support tickets."

Type: Communication method

- url: URL-based communication (currently the only supported method)

Server URL: The endpoint of your remote MCP server

Example:

https://docs.praxis-ai.com/mcp

Tool Management

Tools Filter: Enable to select a subset of available tools

Recommended: Enable this option when your MCP server has many tools. Some servers may expose hundreds of tools, so filtering helps optimize performance and focus functionality.

Selected Tools: List of specific tool names to enable
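
The connector fields and the tools filter described above can be pictured as a small data structure. The following is a minimal Python sketch, not the Praxis implementation: the `McpConnector` class, its attribute names, the `validate` and `enabled_tools` helpers, and the tool names are all hypothetical and chosen only for illustration. It mirrors the form fields and shows how a case-sensitive tools filter might narrow the set of tools a server exposes.

```python
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class McpConnector:
    """Hypothetical in-memory mirror of the connector form (not a Praxis API)."""

    label: str                    # Connector Name (Label); must match the MCP Server Label
    description: str              # sent to the AI model as context
    server_url: str               # endpoint of the remote MCP server
    active: bool = True           # Status: Active or Inactive
    connector_type: str = "url"   # "url" is currently the only supported method
    tools_filter: bool = False    # Tools Filter toggle
    selected_tools: list[str] = field(default_factory=list)

    def validate(self) -> list[str]:
        """Return a list of human-readable problems (empty when the form looks fine)."""
        problems = []
        if not self.label.strip():
            problems.append("label is required")
        if self.connector_type != "url":
            problems.append("connector_type must be 'url'")
        parsed = urlparse(self.server_url)
        if parsed.scheme not in ("http", "https") or not parsed.netloc:
            problems.append("server_url must be an absolute http(s) URL")
        if self.tools_filter and not self.selected_tools:
            problems.append("tools_filter is enabled but no tools are selected")
        return problems

    def enabled_tools(self, available: list[str]) -> list[str]:
        """Apply the tools filter to the names the server exposes (case-sensitive)."""
        if not self.tools_filter:
            return list(available)
        allowed = set(self.selected_tools)
        return [name for name in available if name in allowed]


# Example values only; the tool names are invented for this sketch.
crm = McpConnector(
    label="crm",
    description="Provides access to the company's CRM.",
    server_url="https://docs.praxis-ai.com/mcp",
    tools_filter=True,
    selected_tools=["search_customers", "create_ticket"],
)
```

In this sketch, `crm.enabled_tools([...])` keeps only names that match a selected tool exactly, so a server tool named `Create_Ticket` would not match the selected `create_ticket`.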

Approval Settings

Requires Approval: When enabled, the LLM requests user permission before using MCP tools

Recommendation: Leave disabled for most use cases to maintain a smooth user experience

Ignore Approval for Tools: List of tools that bypass the approval step when approval is required

Use this for frequently used, low-risk tools such as search or read-only operations.
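
How the two approval settings interact can be summarized in a few lines. This is a sketch of the decision as the text describes it, not Praxis source code; the function name `needs_user_approval`, its parameters, and the tool names are assumptions made for illustration.

```python
def needs_user_approval(
    tool_name: str,
    requires_approval: bool,
    ignore_approval_for: set[str],
) -> bool:
    """Decide whether a tool call should pause for user permission.

    requires_approval    -- the "Requires Approval" toggle
    ignore_approval_for  -- the "Ignore Approval for Tools" list
    """
    if not requires_approval:
        # Approval is switched off for the whole connector.
        return False
    # Approval is on: only tools on the bypass list skip the prompt.
    return tool_name not in ignore_approval_for


# Hypothetical tool names: a read-only search bypasses approval, a write does not.
bypass = {"search_customers"}
print(needs_user_approval("search_customers", True, bypass))
print(needs_user_approval("update_contact", True, bypass))
```

Under these assumptions, the bypass list has no effect while Requires Approval is disabled, which matches its description as applying only "when approval is required".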

Authentication

Authorization Header: Service-level authentication token

Format:

Bearer xyz123...

Best Practices

Security Considerations

- Use service accounts with minimal required permissions

- Regularly rotate authorization tokens

- Monitor MCP server access logs

- Implement rate limiting on your MCP servers
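
One way to make token rotation easier is to keep the service token out of source code and read it at runtime. The sketch below assumes the token lives in an environment variable; the variable name `MCP_SERVICE_TOKEN` and the helper `authorization_header` are hypothetical, and the only detail taken from this page is the `Bearer <token>` header format.

```python
import os


def authorization_header(env_var: str = "MCP_SERVICE_TOKEN") -> dict[str, str]:
    """Build the Authorization header value from an environment variable.

    The variable name is an assumption for this sketch; use whatever secret
    store your deployment provides.
    """
    token = os.environ.get(env_var, "").strip()
    if not token:
        raise RuntimeError(f"{env_var} is not set")
    if any(ch.isspace() for ch in token):
        raise ValueError("token must not contain whitespace")
    return {"Authorization": f"Bearer {token}"}
```

With this approach, rotating a token means updating the secret and the connector's Authorization Header field, without editing any code.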

Performance Optimization

- Enable tool filtering for servers with many available tools

- Use descriptive connector names for easy management

- Test connectors in development before production deployment

- Monitor response times and adjust timeouts as needed
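
Monitoring response times can start with a simple timing wrapper around whatever code calls your MCP server during testing. The following is a generic sketch using only the Python standard library; `timed_call`, the 2-second threshold, and the stand-in `fake_request` function are illustrative assumptions and are not part of Praxis.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def timed_call(fn: Callable[[], T], slow_after_seconds: float = 2.0) -> tuple[T, float, bool]:
    """Run fn once and report its result, elapsed seconds, and a 'slow' flag."""
    start = time.perf_counter()
    result = fn()
    elapsed = time.perf_counter() - start
    return result, elapsed, elapsed > slow_after_seconds


def fake_request() -> str:
    """Stand-in for a real request to an MCP server (hypothetical)."""
    time.sleep(0.01)
    return "ok"


result, elapsed, slow = timed_call(fake_request)
print(f"result={result} elapsed={elapsed:.3f}s slow={slow}")
```

Collecting these measurements over many calls gives a baseline from which a sensible timeout can be chosen.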

Troubleshooting

- Verify model MCP compatibility before deployment

- Check authorization headers and server URLs

- Ensure tool names match exactly (case-sensitive)

- Monitor server logs for connection issues
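
Because tool names are case-sensitive, a quick comparison between the names entered in Selected Tools and the names the server actually exposes can catch near misses. This is a small diagnostic sketch; the function `find_name_mismatches` and the example tool names are hypothetical.

```python
from typing import Optional


def find_name_mismatches(selected: list[str], available: list[str]) -> dict[str, Optional[str]]:
    """Map each selected name with no exact match to a case-insensitive match, if any.

    A value of None means the server exposes nothing resembling that name.
    """
    exact = set(available)
    by_lower = {name.lower(): name for name in available}
    report: dict[str, Optional[str]] = {}
    for name in selected:
        if name in exact:
            continue  # exact, case-sensitive match: nothing to report
        report[name] = by_lower.get(name.lower())
    return report


# Invented names: "Search" differs from "search" only by case; "export" does not exist.
print(find_name_mismatches(["Search", "create_ticket", "export"], ["search", "create_ticket"]))
```

A non-empty report points to entries worth correcting in the connector's Selected Tools or Ignore Approval for Tools lists.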

Next Steps

Read on Model Context Protocol

Read about the Model Context Protocol

Search through existing MCP Servers

Search existing MCP servers

Develop on hosted platforms

Develop MCP servers on hosted platforms such as smithery.ai and zapier.com

Praxis AI is also an MCP Server

Connect Claude, ChatGPT, or your favorite LLM to Praxis AI